
Speed, Cost, and Ecosystem Coverage: The Next Constraints in AI Security Analysis

April 22, 2026

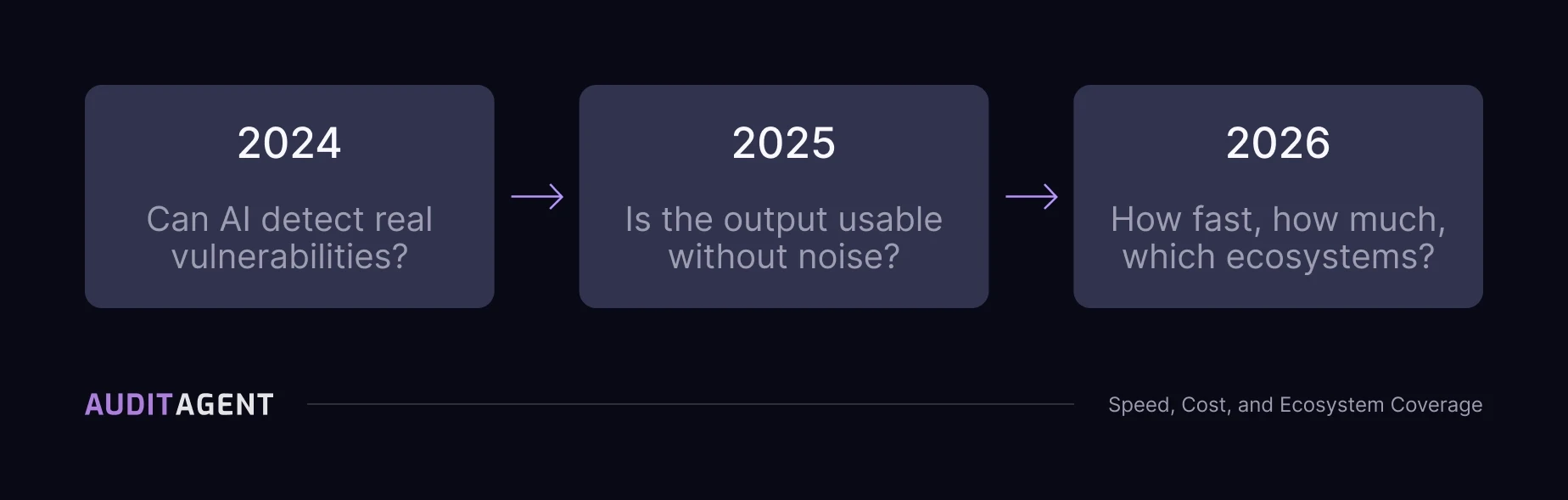

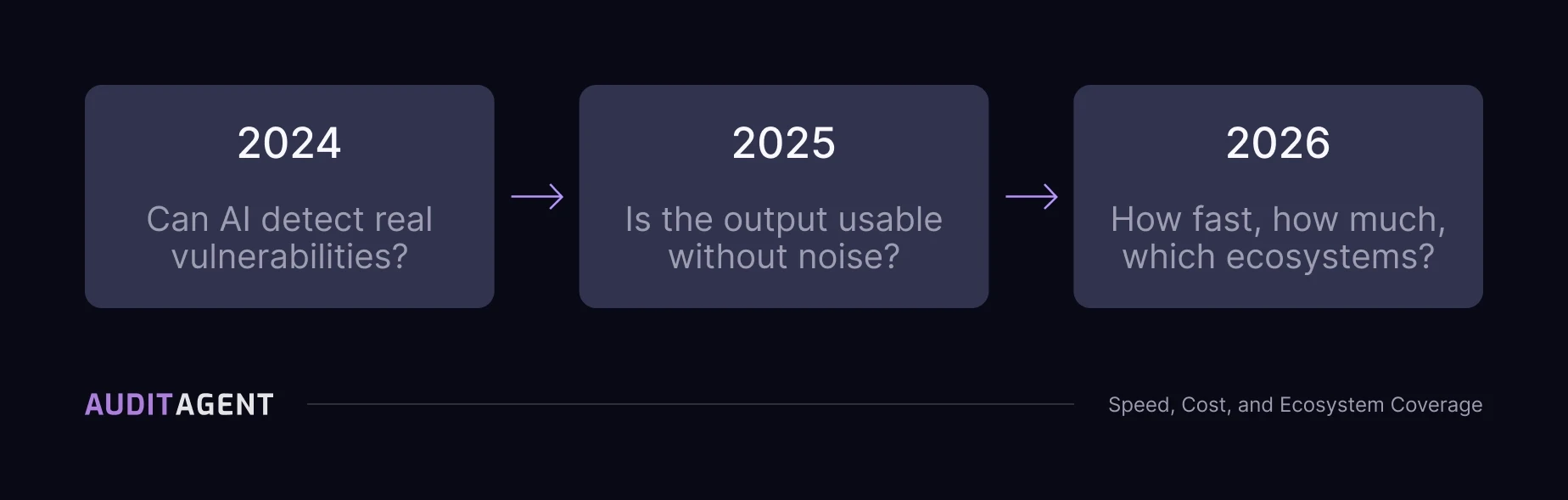

The question shaping AI security tooling has shifted each year. In 2024 it was whether AI could detect real vulnerabilities. In 2025 it was whether the output was usable without excessive noise. In 2026, the constraint is different: how fast analysis runs, what it costs to scale, and how much of the ecosystem it actually covers.

Detection capability is now expected. The constraint is deployment.

How AI Security Tooling Has Evolved (2024 to 2026)

Understanding current constraints requires looking at how teams actually used these systems.

2024: Detection capability

The question was whether AI could detect real vulnerabilities on real codebases. EVMBench provided a standardized way to measure this. Across 40 repositories and 120 high-severity vulnerabilities, AuditAgent detected 80 of 120. The best base model detected 56.
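Those counts are easier to compare as recall, the share of known vulnerabilities a system detected. The minimal Python sketch below simply does that arithmetic on the figures above. The function name is illustrative and not part of any tool.

```python
def recall(detected: int, total: int) -> float:
    """Fraction of known vulnerabilities that a system detected."""
    if total <= 0:
        raise ValueError("total must be positive")
    return detected / total

TOTAL_VULNS = 120  # high-severity vulnerabilities across 40 repositories

audit_agent = recall(80, TOTAL_VULNS)
best_base_model = recall(56, TOTAL_VULNS)

print(f"AuditAgent recall:      {audit_agent:.1%}")      # 66.7%
print(f"Best base model recall: {best_base_model:.1%}")  # 46.7%
print(f"Absolute gap:           {audit_agent - best_base_model:.1%}")  # 20.0%
```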

2025: Noise and usability

High recall exposed a second problem. Systems that found a lot also flagged a lot. Teams deploying in production found themselves doing significant triage work before findings were actionable, which shifted the focus toward validation pipelines and precision. This also became visible in competitive audit platforms, where large volumes of low-signal submissions created operational overhead and in some cases led to restrictions on AI-generated findings.

2026: Speed, cost, and scale

With detection and noise addressed to a usable level, constraints shifted to performance and deployment. Latency, cost per analysis, and ecosystem coverage now determine whether these systems are used continuously or only in limited contexts.

Why Latency Matters in Smart Contract Security Analysis

An analysis that takes hours can support a scheduled audit. It does not fit into development workflows that require fast iteration.

As AI security tooling moves into pre-deployment checks and continuous scanning, results need to arrive within a timeframe developers can act on; a system that can't meet that threshold gets skipped. AuditAgent is designed to run analysis ahead of manual audits, with scan times ranging from a few minutes for CI scans, to under an hour for development use, to several hours for deeper audit preparation. CI scan times already fall within a range most pipelines can absorb. That is enough to support pre-audit workflows, though continuous scanning throughout development remains a latency and cost problem the tooling has not yet fully solved.
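One way a pipeline might choose between these modes is to give each one a time budget and run the deepest mode that fits. The sketch below is hypothetical. The mode names and minute values are assumptions standing in for "a few minutes", "under an hour" and "several hours", and they are not AuditAgent settings.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class ScanMode:
    name: str
    max_minutes: int  # assumed upper bound on wall-clock time for this mode

# Hypothetical modes and budgets, ordered from shallowest to deepest analysis.
MODES = [
    ScanMode("ci", 10),              # stands in for "a few minutes"
    ScanMode("development", 60),     # stands in for "under an hour"
    ScanMode("audit_prep", 6 * 60),  # stands in for "several hours"
]

def deepest_mode_within(budget_minutes: int) -> Optional[ScanMode]:
    """Return the deepest mode whose assumed runtime fits the budget, or None."""
    fitting = [m for m in MODES if m.max_minutes <= budget_minutes]
    return fitting[-1] if fitting else None

for budget in (5, 15, 90, 480):
    mode = deepest_mode_within(budget)
    print(budget, mode.name if mode else "none: skip or run asynchronously")
```

A budget below the shallowest mode returns `None`. This mirrors the point above: a check that cannot finish in time tends to be dropped from the workflow.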

Why Cost Determines Adoption of AI Security Tools

Cost determines how widely a system can be applied. A tool that works for a single audit engagement may not scale across ongoing development without cost efficiency built in.

At lower cost, analysis becomes a routine step. At higher cost, it stays reserved for engagements where someone has explicitly budgeted for it. That's a narrower footprint than most teams want. AuditAgent uses a per-line-of-code pricing model, allowing teams to run analysis during development or before audits without committing to a full manual engagement. This enables broader use, although continuous scanning across every codebase still involves cost tradeoffs.
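Under per-line pricing, cost is roughly the product of codebase size, rate and number of scans. That product is why scan frequency, more than codebase size alone, drives the cost of continuous scanning. In the sketch below, the rate, codebase size and scan count are placeholders, not published AuditAgent prices. It also assumes every scan re-analyzes the full codebase.

```python
def analysis_cost(lines_of_code: int, rate_per_line: float, scans: int = 1) -> float:
    """Total cost when every scan is billed per line of code analyzed.

    Assumes the full codebase is re-scanned each time; scanning only
    changed lines would lower the total.
    """
    if lines_of_code < 0 or rate_per_line < 0 or scans < 0:
        raise ValueError("inputs must be non-negative")
    return lines_of_code * rate_per_line * scans

LOC = 5_000   # hypothetical codebase size
RATE = 0.10   # hypothetical price per line, in USD

one_off = analysis_cost(LOC, RATE)                 # a single pre-audit scan
continuous = analysis_cost(LOC, RATE, scans=200)   # e.g. 200 full re-scans in a cycle

print(f"One pre-audit scan: ${one_off:,.2f}")
print(f"200 full re-scans:  ${continuous:,.2f}")
```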

Why Ecosystem Coverage Matters Beyond Solidity

Most AI security tooling has been built for Solidity and EVM-compatible environments. That reflects where most historical data and vulnerabilities exist.

Other ecosystems now represent a growing portion of deployed value:

Move (Aptos, Sui)

Rust-based contracts (Solana, NEAR)

Cairo (Starknet)

Security analysis limited to Solidity does not cover teams deploying across multiple ecosystems. And beyond contracts, real incidents have involved frontend systems, backend infrastructure, deployment scripts, and even Ethereum client implementations, which are often excluded from audit scope. Full coverage requires analysis beyond contract code alone.

How Pre-Audit Analysis Improves Audit Workflows

One current use case is analysis before a manual audit begins. AuditAgent runs against a codebase, identifies known vulnerability patterns, and produces structured findings before auditors engage. The code auditors receive is cleaner, and generated summaries and diagrams improve the documentation. Less auditor time goes to patterns the system already caught, and more goes to deeper analysis of logic and interactions that require human judgment.

The audit remains human-led. It just starts somewhere further along.

What Is AgentArena and How Is It Used

AgentArena runs competitive multi-agent security analysis experiments. Different models and configurations are tested against the same tasks. This makes the tradeoffs between detection capability, noise, speed, and cost visible before changes reach production systems. The platform is designed to remain independent and fair across all agents, including AuditAgent, so teams can compare models before committing to a specific production setup.

How to Evaluate AI Security Tools in 2026

The relevant questions are:

What is the detection rate?

What is the false positive rate, and is the methodology published?

What is the latency per analysis?

What is the cost at scale?

Which ecosystems are supported?

Does the output integrate into existing workflows?

Benchmark recall answers only part of this; the rest requires either published methodology or direct testing.
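With direct testing, the first two questions come down to triaging a tool's findings on a codebase with known issues and counting the outcomes. The sketch below assumes hypothetical counts for illustration. "False positive rate" is taken here to mean the share of reported findings that were wrong (1 − precision), a common usage in tool evaluations. Other definitions exist, which is one reason a published methodology matters.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TriageResult:
    true_positives: int   # findings confirmed as real vulnerabilities
    false_positives: int  # findings rejected during triage
    false_negatives: int  # known vulnerabilities the tool missed

    def recall(self) -> float:
        known = self.true_positives + self.false_negatives
        return self.true_positives / known if known else 0.0

    def precision(self) -> float:
        reported = self.true_positives + self.false_positives
        return self.true_positives / reported if reported else 0.0

    def false_finding_share(self) -> float:
        """Share of reported findings that were wrong (1 - precision)."""
        reported = self.true_positives + self.false_positives
        return self.false_positives / reported if reported else 0.0

# Hypothetical triage of a single tool run.
run = TriageResult(true_positives=18, false_positives=42, false_negatives=6)
print(f"recall={run.recall():.0%} precision={run.precision():.0%} "
      f"false findings={run.false_finding_share():.0%}")
```

Two tools with the same recall can differ sharply on precision, and that difference shows up as triage work.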
